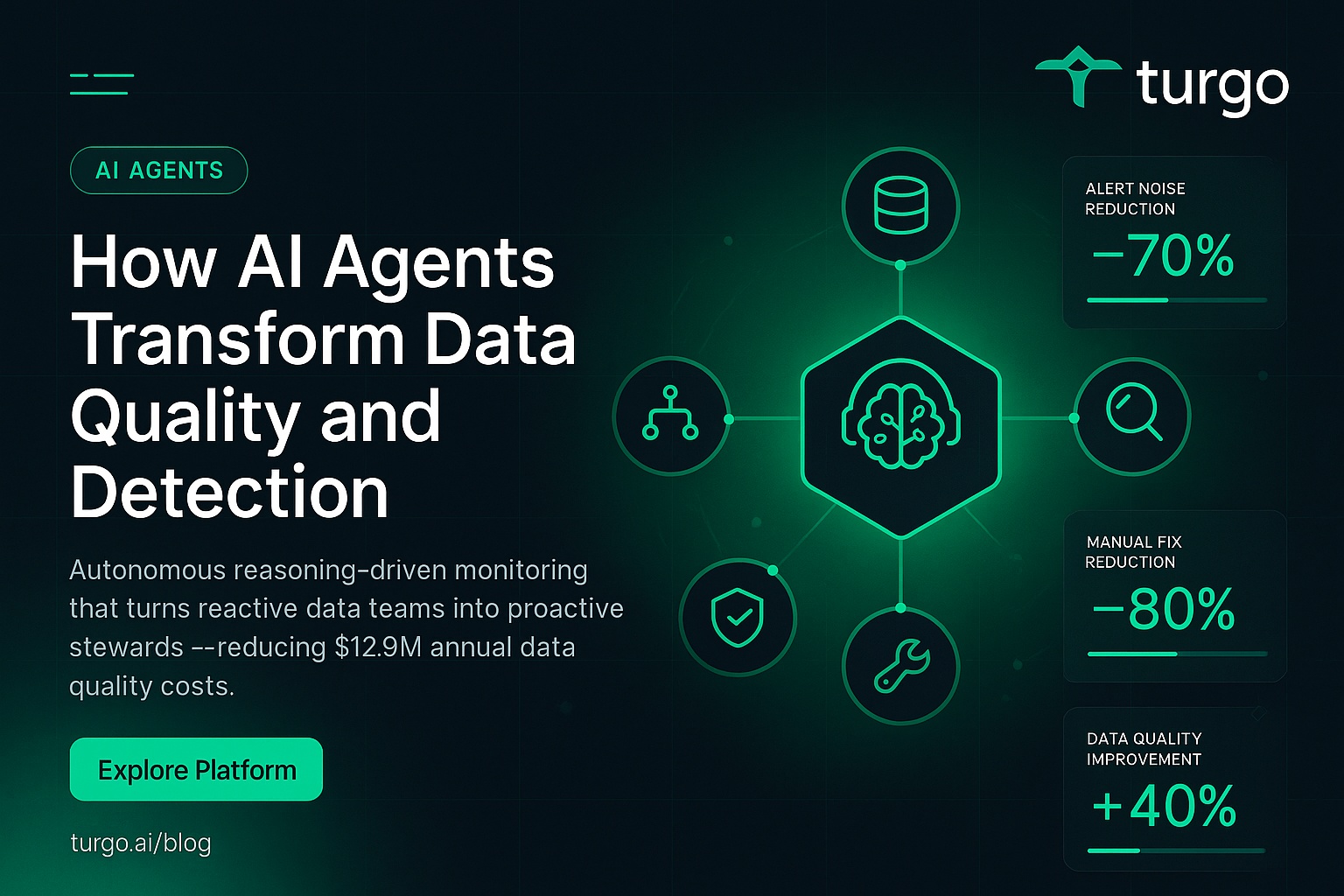

Traditional data quality approaches struggle with the explosion of data volumes, where rule-based systems fail to adapt to evolving patterns, leading to undetected anomalies and significant business costs. AI agents from Turgo.ai redefine this landscape by introducing autonomous, reasoning-driven monitoring that proactively identifies and resolves issues, turning reactive data teams into proactive stewards.

Enterprises face an average cost of $12.9 million annually from poor data quality, but Turgo.ai’s AI agents for data quality and anomaly detection shift the paradigm, leveraging advanced reasoning and tool integration to ensure reliable data pipelines at scale.

Why Are Traditional Data Quality Approaches Failing?

Traditional data quality methods rely on static rules that cannot scale with modern data velocity and complexity, missing subtle anomalies as patterns evolve. They require constant manual tuning, leading to alert fatigue and overlooked issues in high-volume environments.

For example, rule-based systems detect known problems like null values but ignore context-dependent drifts, such as seasonal shifts in sales data. The resulting false negatives propagate errors downstream.

A retail business using legacy tools experienced weekly inventory discrepancies, costing thousands in overstock; switching to AI agents reduced these incidents by automating adaptive checks across suppliers.

Learn more about Turgo.ai’s solutions at https://turgo.ai/solutions.

What Are AI Agents and Why Do They Matter for Data Teams?

AI agents are autonomous systems powered by large language models with reasoning, planning, memory, and tool-calling abilities, enabling them to handle complex data tasks beyond simple automation. They matter for data teams because they shift from reactive alerting to proactive stewardship, continuously learning from data streams.

Unlike traditional ML models that predict statically, AI agents like those in Turgo.ai dynamically query databases, analyze lineage, and self-improve, adapting to new anomaly types without recoding.

A financial firm used AI agents to monitor transaction streams, catching fraud patterns that rules missed, saving millions in potential losses.

Explore Turgo.ai’s approach to intelligent data operations at https://turgo.ai/about.

How Do AI Agents Improve Data Quality in Enterprise Pipelines?

AI agents improve data quality by autonomously profiling datasets, validating schemas in real-time, and remediating issues through integrated workflows. They use contextual reasoning to ensure consistency across pipelines.

This involves multi-step processes: ingesting metadata, detecting drifts, and applying fixes, far surpassing manual checks in speed and accuracy.

In manufacturing, an IoT pipeline saw quality scores rise 40% as agents profiled sensor data daily, preventing production halts.

Discover Turgo.ai’s data quality solutions powered by AI agents at https://turgo.ai/platform.

What Is the Difference Between AI Anomaly Detection and Rule-Based Monitoring?

AI anomaly detection learns from historical baselines to flag deviations dynamically, while rule-based monitoring uses fixed thresholds that fail on novel patterns. AI agents adapt via continuous learning loops, reducing false positives.

Rule-based systems trigger on predefined conditions like volume spikes, but AI agents incorporate context, such as business calendars, for precise classification.
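The contrast above can be sketched in a few lines. This is a minimal illustration, not Turgo.ai's implementation: the fixed threshold, the `normal`/`promo` calendar labels, and the order counts are all hypothetical, and a simple z-score stands in for whatever classification an agent would actually perform.

```python
from statistics import mean, stdev

FIXED_THRESHOLD = 1500  # hypothetical static rule: alert above 1500 orders/day


def rule_based_flag(volume: float) -> bool:
    """Static rule: fires whenever volume exceeds a fixed threshold."""
    return volume > FIXED_THRESHOLD


def contextual_flag(volume: float, history: list[float], z_limit: float = 3.0) -> bool:
    """Flag only if volume deviates strongly from comparable past days."""
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return volume != mu
    return abs(volume - mu) / sigma > z_limit


# Hypothetical daily order counts, grouped by business-calendar context.
history = {
    "normal": [980, 1010, 1005, 995, 1020, 990],
    "promo": [2900, 3100, 3050, 2950, 3000, 3020],
}

promo_day_volume = 3040
print(rule_based_flag(promo_day_volume))                     # True: the static rule fires
print(contextual_flag(promo_day_volume, history["promo"]))   # False: normal for a promo day
print(contextual_flag(promo_day_volume, history["normal"]))  # True: unusual for a normal day
```

The same volume is a false positive under the static rule but is classified correctly once the comparison set matches the calendar context.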

A healthcare provider reduced alert noise by 70% with AI agents classifying EHR anomalies, focusing experts on true risks.

Turgo.ai’s AI agents for data quality excel here; see features at https://turgo.ai/features.

Can AI Agents Automatically Fix Data Quality Issues?

Yes, advanced AI agents implement self-healing pipelines by triggering corrections like reprocessing records or schema adjustments, with human oversight for critical actions. This automates remediation while maintaining control.

They reason over issues—e.g., detecting duplicates—and call tools to deduplicate or alert, learning from outcomes to prevent recurrence.
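As a rough sketch of that detect-then-act step, the function below deduplicates automatically only when the fix is small and escalates otherwise. The `RemediationResult` type, the `max_auto_fraction` guardrail, and the sample SKUs are assumptions made for illustration; a real agent would decide through reasoning and tool calls rather than a single hard-coded ratio.

```python
from dataclasses import dataclass


@dataclass
class RemediationResult:
    action: str  # "none", "deduplicate", or "escalate"
    rows: list
    needs_review: bool = False


def remediate_duplicates(rows, key, max_auto_fraction=0.2):
    """Drop repeated keys (keeping the first occurrence) unless the fix is large."""
    seen, deduped = set(), []
    for row in rows:
        if row[key] not in seen:
            seen.add(row[key])
            deduped.append(row)

    removed = len(rows) - len(deduped)
    if removed == 0:
        return RemediationResult("none", rows)
    if removed / len(rows) > max_auto_fraction:
        # Too many rows affected: leave the data untouched and ask a human.
        return RemediationResult("escalate", rows, needs_review=True)
    return RemediationResult("deduplicate", deduped)


small_batch = [{"sku": f"A{i}"} for i in range(9)] + [{"sku": "A0"}]
large_batch = [{"sku": "B1"}, {"sku": "B1"}, {"sku": "B1"}, {"sku": "B2"}]

print(remediate_duplicates(small_batch, "sku").action)  # deduplicate
print(remediate_duplicates(large_batch, "sku").action)  # escalate
```

Keeping the original rows on escalation means a reviewer sees exactly what the agent saw before any change is applied.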

An e-commerce platform used self-healing agents to reconcile inventory data nightly, cutting manual fixes by 80%.

Check Turgo.ai’s resources for implementation guides at https://turgo.ai/resources.

How Do AI Agents Detect Anomalies in Real-Time Data Streams?

AI agents detect anomalies in real-time by establishing behavioral baselines, monitoring streams for deviations, and classifying via pattern recognition and context. They outperform static methods by adapting thresholds on-the-fly.

Using memory of past incidents, they flag spikes, drifts, or semantic issues, routing for review as in Domo’s classification agent.
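One simple way to picture a baseline that adapts as the stream moves is a rolling window of recent values. The sketch below is an assumption-laden stand-in (window size, warm-up length, z-limit, and the sample readings are all hypothetical), not a description of any vendor's detector.

```python
from collections import deque
from statistics import mean, stdev


class RollingBaseline:
    """Flags values that deviate from a sliding window of recent normal values."""

    def __init__(self, window: int = 20, z_limit: float = 3.0, warmup: int = 5):
        self.values = deque(maxlen=window)
        self.z_limit = z_limit
        self.warmup = warmup

    def observe(self, x: float) -> bool:
        is_anomaly = False
        if len(self.values) >= self.warmup:
            mu, sigma = mean(self.values), stdev(self.values)
            is_anomaly = sigma > 0 and abs(x - mu) > self.z_limit * sigma
        if not is_anomaly:
            # Only normal points update the baseline, so one spike
            # does not widen the threshold for the next reading.
            self.values.append(x)
        return is_anomaly


detector = RollingBaseline()
stream = [100, 101, 99, 100, 102, 98, 100, 250, 101]
flags = [detector.observe(x) for x in stream]
print(flags)  # only the 250 reading is flagged
```

Excluding flagged points from the window is a design choice with a cost: a genuine, lasting level shift keeps being flagged until someone confirms it as the new normal, which is where review routing comes in.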

A logistics company monitored shipment data streams, detecting delays instantly and rerouting automatically.

Read latest insights on AI and data quality at https://turgo.ai/blog.

What Types of Data Anomalies Can AI Agents Detect?

AI agents detect volume changes, schema drifts, distribution shifts, duplicates, null patterns, referential violations, and semantic inconsistencies requiring reasoning. Their tool integration covers structured and unstructured data.

Unlike basic detectors, they use LLM reasoning for cross-field logic, as in agent-centric text anomaly benchmarks.
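Several of the structural anomaly types listed above can be checked deterministically, and an agent would typically expose checks like these as tools. The helper names, the expected schema, and the sample rows below are hypothetical; semantic inconsistencies that need LLM reasoning are deliberately out of scope for this sketch.

```python
def schema_drift(expected: dict[str, type], row: dict) -> dict[str, list[str]]:
    """Compare one record against an expected column-to-type mapping."""
    return {
        "missing": sorted(set(expected) - set(row)),
        "unexpected": sorted(set(row) - set(expected)),
        "wrong_type": sorted(
            col for col, typ in expected.items()
            if col in row and row[col] is not None and not isinstance(row[col], typ)
        ),
    }


def null_rate(rows: list[dict], column: str) -> float:
    """Fraction of rows where the column is absent or None."""
    return sum(1 for r in rows if r.get(column) is None) / len(rows)


def orphaned_keys(child_rows: list[dict], fk: str, parent_keys: set) -> list:
    """Foreign-key values with no matching parent (referential violation)."""
    return sorted({r[fk] for r in child_rows if r[fk] is not None} - set(parent_keys))


expected = {"order_id": int, "customer_id": int, "amount": float}
orders = [
    {"order_id": 1, "customer_id": 10, "amount": 25.0},
    {"order_id": 2, "customer_id": 11, "amount": None},
    {"order_id": 3, "customer_id": 99, "amount": "12.50"},  # amount arrived as text
]
customers = {10, 11}

print(schema_drift(expected, orders[2]))        # wrong_type lists "amount"
print(null_rate(orders, "amount"))              # one of three rows is null
print(orphaned_keys(orders, "customer_id", customers))  # [99]
```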

In finance, agents spotted fraudulent patterns in transaction volumes and semantics, preventing breaches.

See how organizations use Turgo.ai in case studies at https://turgo.ai/case-studies.

How Do AI Agents Integrate with Existing Data Infrastructure?

AI agents integrate via APIs, database connectors, and orchestration hooks with tools like dbt, Airflow, or Spark, embedding into DAGs or triggering on test failures. This ensures seamless operation in current stacks.

They call tools dynamically for queries or transformations, maintaining pipeline flow.
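Dynamic tool calling can be pictured as a registry of named functions that the agent invokes with structured arguments. The sketch below uses Python's standard `sqlite3` module with an in-memory database; the `TOOLS` registry, the `call_tool` request format, and the `orders` table are assumptions for illustration and do not reflect any particular agent framework or the Airflow, dbt, or Spark APIs.

```python
import sqlite3
from typing import Callable

TOOLS: dict[str, Callable[..., object]] = {}


def tool(name: str):
    """Register a function under a name the agent can request."""
    def register(fn):
        TOOLS[name] = fn
        return fn
    return register


def _require_table(conn: sqlite3.Connection, table: str) -> None:
    # Guardrail: only allow identifiers that actually exist in the database.
    known = {r[0] for r in conn.execute("SELECT name FROM sqlite_master WHERE type='table'")}
    if table not in known:
        raise ValueError(f"unknown table: {table}")


@tool("row_count")
def row_count(conn: sqlite3.Connection, table: str) -> int:
    _require_table(conn, table)
    return conn.execute(f'SELECT COUNT(*) FROM "{table}"').fetchone()[0]


@tool("null_count")
def null_count(conn: sqlite3.Connection, table: str, column: str) -> int:
    _require_table(conn, table)
    columns = {r[1] for r in conn.execute(f'PRAGMA table_info("{table}")')}
    if column not in columns:
        raise ValueError(f"unknown column: {column}")
    return conn.execute(
        f'SELECT COUNT(*) FROM "{table}" WHERE "{column}" IS NULL'
    ).fetchone()[0]


def call_tool(conn: sqlite3.Connection, request: dict) -> object:
    """Dispatch a structured request such as an LLM tool call might produce."""
    fn = TOOLS.get(request["tool"])
    if fn is None:
        raise KeyError(f"no such tool: {request['tool']}")
    return fn(conn, **request["args"])


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, amount REAL)")
conn.executemany("INSERT INTO orders VALUES (?, ?)", [(1, 9.5), (2, None), (3, None)])

print(call_tool(conn, {"tool": "row_count", "args": {"table": "orders"}}))   # 3
print(call_tool(conn, {"tool": "null_count",
                       "args": {"table": "orders", "column": "amount"}}))    # 2
```

Validating table and column names against the database catalog before building the query keeps an agent-supplied identifier from turning into arbitrary SQL.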

A tech firm integrated agents into Airflow, automating Spark job validations and cutting downtime.

View Turgo.ai pricing for enterprise integrations at https://turgo.ai/pricing.

What Key Capabilities Should You Look for in AI Agents for Data Quality?

Look for multi-source ingestion, metadata reasoning, tool calling, incident memory, and human-in-the-loop escalation in AI agents. These enable end-to-end observability and adaptability.

Turgo.ai emphasizes continuous learning from human feedback, like anomaly classification loops.

A compliance team selected agents with strong lineage tracing, ensuring audit-ready data governance.

Explore Turgo.ai’s agent capabilities at https://turgo.ai/features.

How Do You Build AI Agents for Data Anomaly Detection?

Building AI agents involves selecting foundation models, designing workflows for pipelines, integrating infrastructure, and adding guardrails for observability. Agentic AI frameworks can support production deployment.

Start with baselines, add anomaly flagging, and iterate via learning loops.
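Those three steps (baseline, flagging, learning loop) might look like the following in their simplest form. The class name, the step size, and the rule of loosening the threshold on false positives and tightening it on missed anomalies are assumptions chosen for illustration; production systems would learn from feedback in richer ways.

```python
from statistics import mean, stdev


class FeedbackTunedDetector:
    """Z-score detector whose threshold moves with reviewer feedback."""

    def __init__(self, baseline, z_limit=2.0, step=0.25, z_min=1.0, z_max=6.0):
        self.mu = mean(baseline)
        self.sigma = stdev(baseline)
        if self.sigma == 0:
            raise ValueError("baseline must contain some variation")
        self.z_limit, self.step = z_limit, step
        self.z_min, self.z_max = z_min, z_max

    def is_anomaly(self, value: float) -> bool:
        return abs(value - self.mu) / self.sigma > self.z_limit

    def record_feedback(self, value: float, was_real: bool) -> None:
        flagged = self.is_anomaly(value)
        if flagged and not was_real:
            # False positive: loosen the threshold slightly.
            self.z_limit = min(self.z_max, self.z_limit + self.step)
        elif not flagged and was_real:
            # Missed anomaly: tighten the threshold slightly.
            self.z_limit = max(self.z_min, self.z_limit - self.step)


detector = FeedbackTunedDetector(baseline=[50, 52, 48, 51, 49])
print(detector.is_anomaly(54))  # True at the initial threshold

for _ in range(3):  # a reviewer marks 54 as benign three times
    detector.record_feedback(54, was_real=False)

print(detector.is_anomaly(54))  # False once the threshold has loosened
print(detector.is_anomaly(60))  # True: large deviations are still caught
```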

A DevOps team built agents using open tools, reducing MTTR in IT anomalies.

Access data quality resources and guides at https://turgo.ai/resources.

What Is AI Observability and Its Role in Agentic Data Systems?

AI observability tracks model performance, semantic drifts, and anomalies in agent outputs, ensuring reliable operations. It bridges traditional monitoring gaps for LLM-powered data tasks.

Components include trace maps and real-time alerts for biases or leaks.

In AI sales agents, observability prevented data leaks, maintaining trust.

Turgo.ai integrates this for intelligent data observability; visit https://turgo.ai/platform.

How Do Multi-Agent Systems Enhance Data Governance?

Multi-agent systems assign specialized roles—like quality monitoring or lineage tracking—coordinating via orchestration for comprehensive governance. This scales beyond single-agent limits.

Agents collaborate on complex tasks, such as root cause analysis across sources.
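The role split can be sketched with plain functions standing in for agents. Everything here is hypothetical (the `Finding` type, the `KNOWN_SOURCES` registry, the batch layout), and real agents would reason with an LLM rather than run fixed checks; the point is only how an orchestration layer merges specialized outputs into one report.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    agent: str
    message: str


KNOWN_SOURCES = {"erp_export", "supplier_feed_v1"}  # hypothetical lineage registry


def quality_agent(batch: dict) -> list[Finding]:
    """Quality role: report rows with a missing amount."""
    missing = [r["id"] for r in batch["rows"] if r.get("amount") is None]
    return [Finding("quality", f"missing amount in rows {missing}")] if missing else []


def lineage_agent(batch: dict) -> list[Finding]:
    """Lineage role: report batches from an unregistered upstream source."""
    if batch["source"] not in KNOWN_SOURCES:
        return [Finding("lineage", f"unregistered source {batch['source']!r}")]
    return []


def orchestrate(batch: dict, agents) -> list[Finding]:
    """Run each specialized agent and merge the findings into one report."""
    findings: list[Finding] = []
    for agent in agents:
        findings.extend(agent(batch))
    return findings


batch = {
    "source": "supplier_feed_v2",
    "rows": [{"id": 1, "amount": 10.0}, {"id": 2, "amount": None}],
}
for finding in orchestrate(batch, [quality_agent, lineage_agent]):
    print(f"[{finding.agent}] {finding.message}")
```

Seeing the quality finding next to the lineage finding is what makes a root cause hypothesis possible: the missing values coincide with a new, unregistered upstream feed.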

A bank used a multi-agent system for end-to-end transaction governance, boosting compliance.

Learn about Turgo.ai’s approach at https://turgo.ai/about.

What Are Self-Healing Data Pipelines with AI Agents?

Self-healing pipelines use AI agents to detect issues, hypothesize causes, and apply fixes autonomously, like reprocessing or alerting. This minimizes downtime.

Drawing from IT anomaly resolution, agents curate evidence for targeted repairs.

Manufacturing sensors benefited, auto-correcting IoT drifts to avoid defects.

See Turgo.ai case studies at https://turgo.ai/case-studies.

AI Agents vs. Traditional Methods: A Comparison

| Aspect | Rule-Based | ML-Based | AI Agent-Based |
|---|---|---|---|
| Adaptability | Low (static rules) | Medium (learns patterns) | High (reasoning + memory) |
| False Positives | High | Medium | Low (contextual) |
| Scalability | Poor for volume | Good | Excellent (autonomous) |
| Remediation | Manual | Semi-auto | Self-healing |
| Cost | Low initial, high maintenance | Medium | High ROI long-term |

AI agents excel in dynamic environments; Turgo.ai offers this edge.

What Is the Future of AI Agents in Data Quality?

The future features multi-agent collaboration, agentic RAG for compliance, and autonomous SLAs, evolving data ops into intelligent systems. Production-ready agents will dominate.

As in ATAD benchmarks, they co-evolve with models for robust detection.

Enterprises adopting early gain competitive data intelligence.

Stay updated at https://turgo.ai/blog.

What Is the ROI of AI Agents for Data Quality?

Deploying AI agents typically cuts manual investigation time by 60-80%, reduces incidents, and speeds up insights, helping offset the $12.9M average annual cost of poor data quality that Gartner reports.

Savings compound via automation and learning.

A firm recouped investment in months through reduced downtime.

Schedule a demo with Turgo.ai at https://turgo.ai/contact.

FAQs

What are AI agents for data quality, and how do they work?

AI agents for data quality are autonomous systems that use large language models combined with tools, memory, and reasoning to continuously monitor, validate, and remediate data issues across your pipelines — without requiring manual rule configuration.

How is AI-powered anomaly detection different from traditional threshold-based monitoring?

Traditional monitoring relies on static rules that break when data patterns shift. AI agents learn from historical patterns, understand context, and adapt their detection criteria dynamically — catching subtle anomalies that rule-based systems miss entirely.

Can AI agents automatically fix data quality issues they detect?

Yes — advanced AI agents can implement self-healing workflows. When they detect a schema drift or data anomaly, they can trigger corrective actions like re-processing records, alerting downstream consumers, or applying transformation rules — all with human-in-the-loop oversight for critical decisions.

What types of data anomalies can AI agents detect?

AI agents can detect a wide range of anomalies including volume spikes/drops, schema changes, distribution drift, null value patterns, duplicate records, referential integrity violations, and even semantic inconsistencies that require contextual understanding.

How do AI agents integrate with existing data infrastructure like dbt, Airflow, or Spark?

Modern AI agents use tool-calling capabilities to connect with your existing stack via APIs, database connectors, and orchestration hooks. They can be embedded within your Airflow DAGs, triggered by dbt test failures, or monitor Spark job outputs in real-time.

What is the ROI of deploying AI agents for data quality?

Organizations typically see 60-80% reduction in manual data quality investigation time, significantly fewer production incidents caused by bad data, and faster time-to-insight. The cost of poor data quality averages $12.9M per year per organization (Gartner), making AI agents a high-ROI investment.

Are AI agents for data quality secure for enterprise use?

Yes — enterprise-grade AI agents operate within your security perimeter, support role-based access controls, and can be configured with strict guardrails that prevent unauthorized data access or modifications. Look for solutions with SOC 2 compliance and data residency controls.

How do multi-agent systems improve data governance?

Multi-agent architectures allow specialized agents to handle different aspects of data governance — one agent monitors quality metrics, another manages lineage documentation, a third handles compliance checks — all coordinating through an orchestration layer to provide comprehensive coverage.

How do AI agents reduce false positives in anomaly detection?

AI agents use continuous learning from human feedback and contextual reasoning to refine classifications, minimizing noise by distinguishing true anomalies from benign variations, unlike rigid rules.

What are the best AI agent tools for data quality monitoring in 2026?

Among AI agent tools, Turgo.ai stands out for data-specific anomaly detection, integrating seamlessly with production pipelines for autonomous monitoring and remediation.

Turgo.ai’s AI agents transform data quality from a cost center to a strategic asset, delivering scalable anomaly detection and self-healing capabilities that enterprises need in 2026. By addressing gaps in traditional methods, they enable reliable AI agents in production, reducing risks and unlocking data’s full potential—start with Turgo.ai’s AI-powered data quality platform at https://turgo.ai/.
